Why did I decide to apply QCA to sampling in the first place?

In 2017, as part of a team of specialists [1], I evaluated an FAO strategic programme consisting of some 700 projects amounting to USD 585 million [2]. The evaluation aimed to determine FAO’s contribution to food security and nutrition policies in the 167 countries of intervention. As often happens, the human and financial resources assigned to this evaluation did not allow us to visit a substantial proportion of these countries for a detailed evaluation of projects. The evaluation team therefore sought a sampling method that would maximize the heterogeneity of the sampled programme countries at the lowest possible cost, that is, with a small number of case studies. Qualitative Comparative Analysis (QCA) appeared appropriate for this goal.

What is QCA?

QCA is a social science method first introduced by Charles Ragin in 1987. It is a research approach that helps describe and analyze complex causal pathways and interactions among causal factors. At the same time, QCA is an analytical technique that finds empirical patterns in data, which makes it very similar to quantitative, variable-oriented techniques of data analysis [3]. The particularity of QCA, therefore, is that it combines the depth of qualitative information with the rigor of quantitative methods, and allows the process and findings to be replicated and generalized to other contexts [4]. While it has been widely used in the academic world, the method has only recently been applied to evaluation. Useful resources on QCA for evaluation include Barbara Befani’s guide on evaluating development interventions with qualitative comparative analysis [5]. BetterEvaluation also provides some references on the application of QCA in evaluation [6].

How did we apply QCA to sampling in the evaluation?

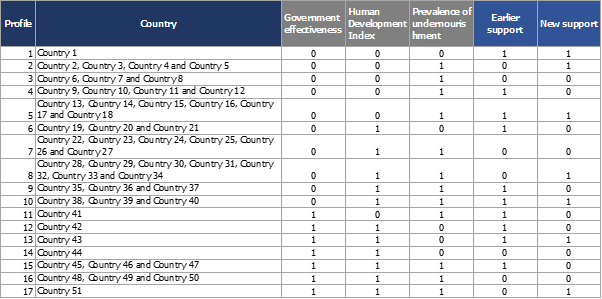

We began by identifying an “outcome of interest” specific to our research. In the FAO evaluation in question, the outcome of interest was defined as “member countries substantially improved policies, laws and regulations and allocation of funds for eradication of hunger, food insecurity and malnutrition.” As a second step, we developed a working hypothesis about the factors that influence the outcome and FAO’s performance and results at country level, drawing on an in-depth desk review, preliminary interviews with key informants and the evaluation Theory of Change. These factors included external outcome-enabling factors (the wider development context) and FAO internal outcome-enabling factors related to FAO programmes, grouped into “earlier support” and “new support”. The development context data was initially collected with the scales assigned by the data source, while FAO-specific data was carefully compared across countries and qualitatively assigned a score or a benchmark (“calibration”). All the factor scales were then further calibrated and categorized on a binary scale of “present” or “absent”, a technique known as crisp-set QCA. We then ran the QCA procedure and presented all factors in a Truth Table with binary values, which allowed us to group countries into profiles sharing the same combination of factors (Table 3). The analysis identified 17 different country profiles, from which a sample of 9 country case studies was selected (for more details on the process, please refer to the methodological note).
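The grouping step described above can be sketched in a few lines of Python. This is only an illustration, not the procedure or software used in the evaluation: the country names, the three factor names and all values are invented, and the rule of taking one country per profile is a placeholder (the actual evaluation selected 9 case studies from 17 profiles using criteria set out in the methodological note).

```python
from collections import defaultdict

# Hypothetical calibrated data: 1 = factor present, 0 = factor absent.
# Factor names, country names and values are invented for illustration.
FACTORS = ("enabling_context", "earlier_support", "new_support")

countries = {
    "Country A": (1, 1, 0),
    "Country B": (1, 1, 0),
    "Country C": (0, 1, 1),
    "Country D": (0, 0, 0),
    "Country E": (0, 1, 1),
    "Country F": (1, 0, 1),
}


def build_truth_table(data):
    """Group countries by their combination of binary factor values."""
    profiles = defaultdict(list)
    for country, combination in data.items():
        profiles[combination].append(country)
    return dict(profiles)


def pick_one_per_profile(profiles):
    """Placeholder selection rule: the alphabetically first country per profile."""
    return sorted(min(members) for members in profiles.values())


truth_table = build_truth_table(countries)
sample = pick_one_per_profile(truth_table)

# Print one row per profile: the factor combination and the countries sharing it.
for combination, members in sorted(truth_table.items()):
    row = ", ".join(f"{name}={value}" for name, value in zip(FACTORS, combination))
    print(f"{row} -> {members}")
print("Sample:", sample)
```

In this toy data set, six countries collapse into four profiles, so four case studies would already cover every observed combination of factors. In practice the choice within and across profiles would also weigh budget, access and data availability.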

Main challenges

While applying QCA added rigor to the evaluation and helped maximize the diversity of programme countries at the lowest possible cost, the technique has its challenges and limitations.

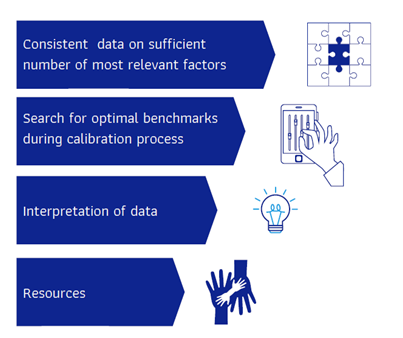

- Consistent data on a sufficient number of the most relevant factors

While selecting and collecting data on factors influencing the outcome, it is important to ensure consistent data with no gaps. In theory-based evaluations, it is suggested to rely on a strong and detailed Theory of Change for more precise selection of relevant factors. Being overambitious in our research, we initially collected data on a multitude of factors and eventually discarded some of them due to data gaps for some countries. Then we needed to prioritize certain factors over others or further aggregate them to have a manageable number of combinations in a final Truth Table. Revisiting the Theory of Change constructed for the evaluation was useful in this task.

- Search for optimal benchmarks during the calibration process

Calibrating the data sets and synthesizing the qualitative data into scores removes some of the details and nuances of the qualitative information. To reflect practical decision-making realities while assigning scales to factors, we used the “crisp-set” QCA technique. Deciding on the optimal criteria for the presence or absence of a particular factor was a challenge for our team as well. We tried multiple options and collected the views of relevant stakeholders to reduce subjectivity. Because “fuzzy-set” QCA allows multiple scale values during calibration, it provides more flexibility and might, in some instances, be more appropriate than crisp-set QCA, which applies a binary scale.
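To make the difference concrete, here is a minimal sketch of the two calibration styles. The raw scores, the threshold and the anchor points are all invented for illustration and are not the benchmarks used in the evaluation; the four-value scale (0, 0.33, 0.67, 1) is one commonly used fuzzy-set scheme, assumed here for simplicity.

```python
def crisp_calibrate(score, threshold):
    """Return 1 (factor present) if the raw score reaches the threshold, else 0."""
    return 1 if score >= threshold else 0


def fuzzy_calibrate(score, anchors):
    """Map a raw score to a four-value fuzzy membership: 0, 0.33, 0.67 or 1.

    anchors is a hypothetical (low, crossover, high) tuple of ascending cut-offs.
    """
    low, crossover, high = anchors
    if score >= high:
        return 1.0
    if score >= crossover:
        return 0.67
    if score >= low:
        return 0.33
    return 0.0


# Invented raw scores on a 0-10 scale for a single factor.
raw_scores = {"Country A": 2, "Country B": 5, "Country C": 6, "Country D": 9}

crisp = {c: crisp_calibrate(s, threshold=6) for c, s in raw_scores.items()}
fuzzy = {c: fuzzy_calibrate(s, anchors=(3, 6, 8)) for c, s in raw_scores.items()}

print(crisp)
print(fuzzy)
```

Under the crisp threshold, Country A (score 2) and Country B (score 5) both end up as “absent”, although B sits just below the cut-off. The fuzzy scale keeps that distinction, at the price of more benchmarks to justify.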

- Interpretation of results

When using the results of the analysis to assign country visits according to profiles, it was essential to refer back continually to the original data. This helped avoid pitfalls in interpretation caused by the loss of detail when qualitative data is synthesized during calibration.

- Resources

It is important to assign sufficient time and human resources for sampling through QCA. It took about two months for three FAO team members to collect the data, run the analysis and interpret its results. Financial resources should also be considered. The analysis yielded a sample of nine country case studies. However, the evaluation budget allowed us to visit only six countries, while the remaining three were covered through desk-based country case studies.

For all these reasons, it may be necessary to consider a wider range of sampling strategies, depending on the particular research agenda, evaluation questions, characteristics of programmes and resources available to conduct the evaluation.

QCA sampling and the wider evaluation community

In 2018, I highlighted the main takeaways from applying QCA to sampling while presenting the results at the session for young and emerging evaluators during the European Evaluation Society (EES) biennial conference in Thessaloniki. The feedback gathered from experts and experienced evaluators on the presentation was particularly important for me as it allowed a discussion on the perceived benefits and limitations of the method identified during my first experience of applying QCA to sampling.

The Thessaloniki discussion continued during the United Nations Evaluation Group (UNEG) week in Nairobi in May 2019, where one of the evaluation practice exchange sessions on methods provided the stage for further debate on the application of QCA, including to sampling. This discussion expanded on the challenges and lessons learnt originally identified in the presentation I delivered in Thessaloniki.

While it is certainly not a panacea, I believe the application of QCA to sampling has added to the toolbox of current and prospective evaluators, and that we will continue hearing about it over the next few years.

—————————-

The blog is based on the methodological note presented at the EES Conference 2018 in Thessaloniki, accessible here:

More FAO evaluations can be consulted here: http://www.fao.org/evaluation/en/

________________________________

[1] The three FAO internal evaluators were Olivie Cossee, Senior Evaluation Officer; Veridiana Mansour Mendez, Evaluation Officer; and Alena Lappo, Evaluation Consultant.

[2] Evaluation of FAO Strategic Objective 1: Contribute to the eradication of hunger, food insecurity and malnutrition. FAO. Rome. 2018. http://www.fao.org/3/I9572EN/i9572en.pdf

[3] Wagemann C. and Schneider C.Q. Standards of Good Practices in Qualitative Comparative Analysis (QCA) and Fuzzy-Sets. Comparative Sociology, Volume 9, Issue 3, p. 397–418. 2010.

[4] Befani B. Pathways to change: evaluating development interventions with qualitative comparative analysis (QCA). EBA. 2016.

[5] Befani B. Pathways to change: evaluating development interventions with qualitative comparative analysis (QCA). EBA. 2016.

[6] https://www.betterevaluation.org/en/evaluation-options/qualitative_comparative_analysis