David Martyn, PhD Researcher at University College Dublin and Education Researcher at Plan International Ireland, argues that the Aristotelian concept of Phronesis can help develop a more values-based approach to evaluation.

What is the point of an evaluation? Should we be narrowly focused on assessing what did or did not work in a given intervention and what its impact has been? Are we losing sight of that which we value in our approaches to evaluation? This post will explore these issues through the idea of phronesis and a focus on values.

What is Phronesis?

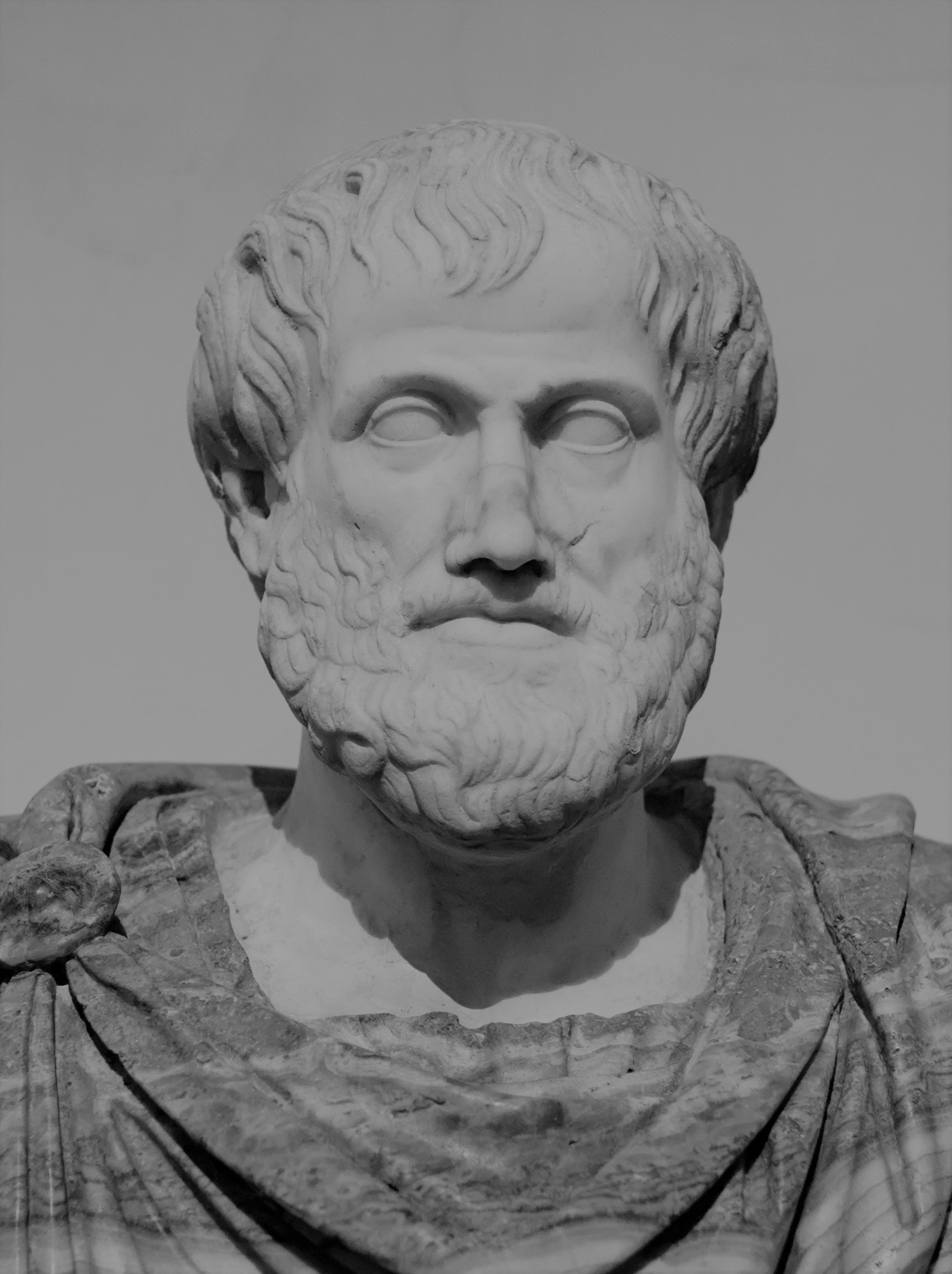

The idea of phronesis is quite an ancient one. It is derived from Aristotle and is explained in Book VI of Nicomachean Ethics. It is one of three forms of knowledge, along with episteme and techne. Episteme refers to codified, universal, scientific knowledge. An example would be scientific laws, such as the laws of thermodynamics. Techne refers to knowledge related to craft or skill. An example of techne would be a cabinet maker’s skill in making furniture.

Phronesis itself is practical knowledge, wisdom, or experiential judgement. Aristotle describes it as ‘a true and reasoned state of capacity to act with regard to the things that are good or bad for man’. It is a type of knowledge that we all use every day, derived from our lived experiences, but with a focus on value judgements and the common good. It is concerned with why we do something.

It has received attention in the social sciences over the past couple of decades mainly through the work of the Oxford professor Bent Flyvbjerg. His work uses phronesis to develop an alternative approach to conducting social science research. He critiqued and moved away from what he saw as the failures of a social science which tried to emulate the natural sciences in its approach. His phronetic social science places importance on what is particular and context-dependent over what is universal. It is also a largely normative way of conducting social science, concerned with questions of what should be, and therefore placing a heavier emphasis on values. To date, however, it has not received much attention in the field of evaluation, particularly in international development, the field I work in.

Why Phronesis?

An important question to ask is why adopt a phronetic approach to evaluation? My own interest has emerged out of the frustrations I felt working in the area of Monitoring & Evaluation within international development, particularly education, over the past eight years. These frustrations can best be summed up by reference to the dominant methodology. Goals, targets and indicators ruled, and it was my job to determine the degree to which they were (or were not) met. There was little room for considerations of values or morality. Instrumental rationality, in which the ends are more important than the means, was the norm. In essence, episteme is prized with little (or no) room for phronesis. This is epitomised by the dominant technocratic approaches such as Results-based Management (RBM) and Randomised Controlled Trials (RCTs). To illustrate this side-lining of phronesis, the researcher Kevin Donovan has noted that there is no to promote wisdom, judgement, intuition and common sense in international aid.

It is important to note that the fields we, as evaluators, work in are largely normative and humanistic endeavours. They are concerned with critically important areas: for example, development and humanitarianism, health, education, and welfare. However, a technical, rational approach tries to erase or hide these humanistic underpinnings, often through the pursuit of objectivity. Yet our values and ideologies are inherently embodied within the tools we use for both monitoring and evaluation, for when we decide to measure something, we are assigning value to it. We can observe detrimental effects from this trend. The academic Steffen Mau offers an illustration. Making what we value objective through quantification and, often, ranking risks turning qualitative differences into quantitative inequalities. The PISA rankings are a case in point. Qualitatively different education systems are evaluated and ranked according to how they perform in standardised tests. Higher-ranked countries are seen as ‘better’ when compared to those below them, while what is particular to a given country’s education system is ignored.

A Phronetic Approach to Evaluation

So, what would a phronetic approach to evaluation entail? I will explore this in relation to the following two interrelated aspects: evidence and values.

In terms of evidence, the favouring of episteme means that certain forms are prized above others: quantitative, comparative, codified, ranked. This is often justified through claims to objectivity and methodological strength. However, this has been criticised as a form of misplaced precision. Furthermore, evaluations can sometimes seek epistemic closure, particularly if they are RCTs. This means that what is produced can often be presented as the ultimate answer to the evaluation questions. The evidence generated by a phronetic approach would be recognised as highly context-dependent and partial. It displays similarities with a realist approach to evaluation in this regard. If evaluations are learning opportunities then a phronetic approach would seek to provide evidence to inform guiding principles rather than simply ‘what worked’.

It should be noted that I am advocating an integration of phronesis with episteme and techne, not a replacement. Codified systems of knowledge are important, especially in the pursuit of truth. They can help to identify what forms of evidence we should value in the contexts in which we are working. In this way, a phronetic approach works at the interface between the universal and the particular.

In terms of values, integrating phronesis entails engaging with applied ethics. In order to use our wisdom, common sense, or experiential judgement we must have a values base. Therefore, a phronetic approach would mean placing more importance on values rather than narrow forms of evidence for guidance and decision-making. This means that episteme or codified knowledge would be in service to a values-based approach. In this, it would prize value rationality above instrumental rationality. To further explain, it may be useful to refer to a 1942 C. S. Lewis essay entitled ‘First and Second Things’. In it, he states that if you place priority on that which you value less (second things) over that which you value more (first things) then you risk losing both. This would point to an evaluation approach which prioritises phronesis, practical wisdom and the recognition of values over narrow, technical knowledge (episteme).

An important consideration in relation to values is whose values count and the associated risk of bias. It is still important to adopt an independent, impartial standpoint to make sense of context-dependent narratives. An awareness of one’s biases and positionality is useful. Otherwise, we risk being bogged down by relativist notions of truth. Judgemental rationality grounded in values can also help to overcome this. This would display a willingness to judge between forms of truth and place value on good methodology.

Conclusion

In summing up, it may be useful to consider the questions that a phronetic approach to evaluation would seek to answer. The dominant question is often ‘what works?’ A more bounded question is often ‘to what extent did this intervention achieve its objectives?’ with a consideration of internal and external factors. A realist approach asks ‘what works, for whom, under what circumstances?’ A phronetic approach to evaluation would ask questions such as:

- Was this the right intervention to undertake?

- Did its implementation accord with the values of the people and organisations involved?

In accordance with C. S. Lewis, this would allow us to put first things first and second things second, while retaining both.

*David Martyn is an Irish Research Council funded Employment Based PhD Researcher at University College Dublin and Education Researcher with Plan International Ireland.

The opinions expressed in this article are the author’s own and do not necessarily reflect those of the European Evaluation Society.